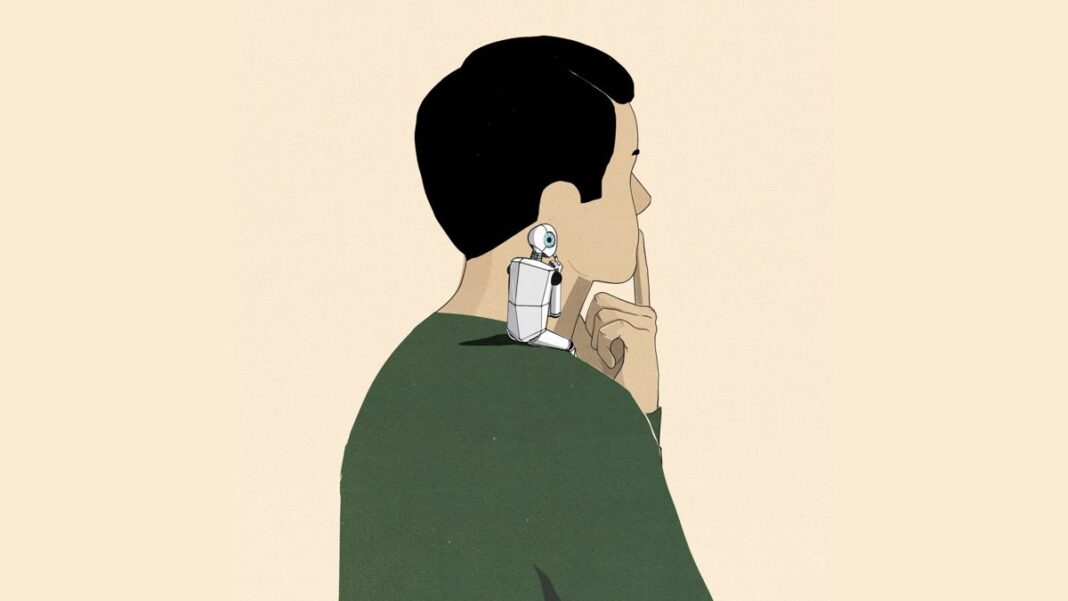

AI assistants may be able to change our views without our realizing it. Says one expert: ‘What’s interesting here is the subtlety.’

When we ask ChatGPT or another bot to draft a memo, email, or presentation, we think these artificial-intelligence assistants are doing our bidding. A growing body of research shows that they also can change our thinking—without our knowing.

One of the latest studies in this vein, from researchers spread across the globe, found that when subjects were asked to use an AI to help them write an essay, that AI could nudge them to write either for or against a particular view, depending on the bias of the algorithm. The exercise also measurably influenced the subjects’ opinions on the topic afterward.

“You may not even know that you are being influenced,” says Mor Naaman, a professor in the information science department at Cornell University, and the senior author of the paper. He calls this phenomenon “latent persuasion.”

These studies raise an alarming prospect: As AI makes us more productive, it may also alter our opinions in subtle and unanticipated ways. This influence may be more akin to the way humans sway one another through collaboration and social norms than to the kind of mass-media and social-media influence we’re familiar with.

Researchers who have uncovered this phenomenon believe that the best defense against this new form of psychological influence—indeed, the only one, for now—is making more people aware of it. In the long run, other defenses, such as regulators mandating transparency about how AI algorithms work, and what human biases they mimic, may be helpful.

All of this could lead to a future in which people choose which AIs they use—at work and at home, in the office and in the education of their children—based on which human values are expressed in the responses that AI gives.

And some AIs may have different “personalities”—including political persuasions. If you’re composing an email to your colleagues at the environmental not-for-profit where you work, you might use something called, hypothetically, ProgressiveGPT. Someone else, drafting a missive for their conservative PAC on social media, might use, say, GOPGPT. Still others might mix and match traits and viewpoints in their chosen AIs, which could someday be personalized to convincingly mimic their writing style.

By extension, in the future, companies and other organizations might offer AIs that are purpose-built, from the ground up, for different tasks. Someone in sales might use an AI assistant tuned to be more persuasive—call it SalesGPT. Someone in customer service might use one trained to be extra polite—SupportGPT.